(This is the first part of what will probably be a five-part blog series on Twitter analytics using Python. Make sure to check out the other posts; I'll also publish a wrap-up post that points to all the posts in the series.)

(Yes, there are lots of other examples out there, but I've put these notes together as a reminder for myself and for a particular project I'm testing.)

In this first blog post I will look at what you need to do to get yourself set up for analysing tweets, how to harvest tweets, and how to do some basic processing. These are covered in the following five steps.

Step 1 – Set up your Twitter Developer Account & Codes

Before you can start writing code you need to get yourself set up with Twitter so that you can download their data using the Twitter API.

To do this you need to register with Twitter at apps.twitter.com. Log in using your Twitter account if you have one; if not, create an account first.

Next click on the Create New App button.

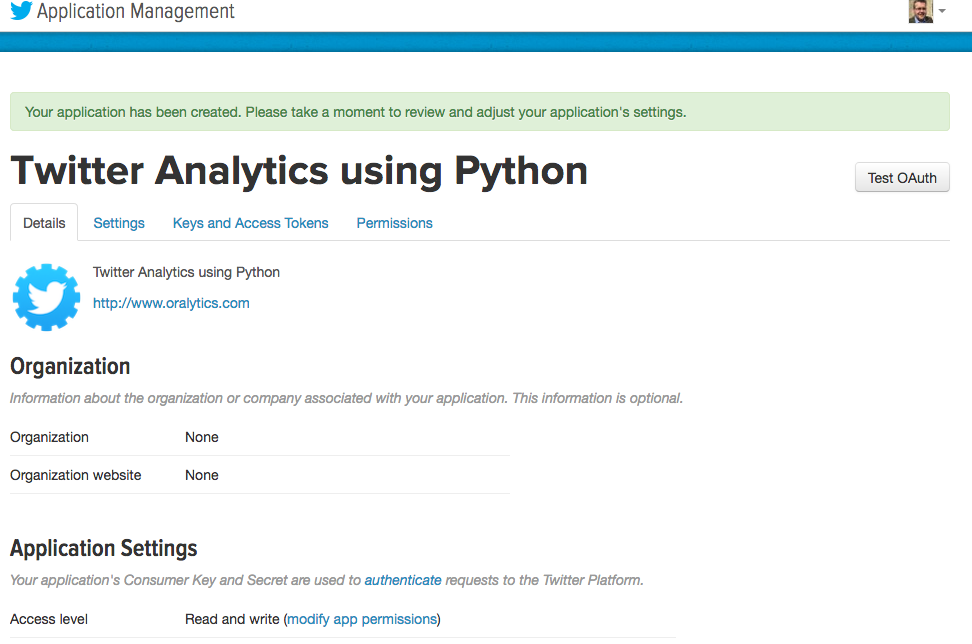

Then give your app a name (e.g. Twitter Analytics using Python), a description and a webpage link (e.g. your blog or something else). Click on the 'add a Callback URL' button and finally tick the check box to agree with the Developer Agreement. Then click the 'Create your Twitter Application' button.

You will then get a web page like the following that contains important information, in particular the consumer and access token values. Keep the information on this page safe as you will need it later when creating your connection to Twitter.

The details contained on this web page (and below what is shown in the above image) will allow you to use the Twitter REST APIs to interact with the Twitter service.

Step 2 – Install libraries for processing Twitter Data

As with most languages, there are plenty of libraries available for you to use, and Python with Twitter is no exception. Tweepy is a very popular one. Make sure to check out the Tweepy web site for full details of what it will allow you to do.

To install Tweepy, run the following.

    pip3 install tweepy

It will download and install tweepy and any dependencies.
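To confirm that the install worked, and to see which Tweepy release you ended up with, a small check such as the following can help. This is a minimal sketch using only the standard library (Python 3.8 or later); the helper name `installed_version` is my own and not part of Tweepy.

```python
from importlib import metadata


def installed_version(package_name):
    """Return the installed version of a package, or None if it is missing."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version("tweepy")
    if version is None:
        print("tweepy is not installed; run: pip3 install tweepy")
    else:
        print("tweepy version:", version)
```

The version matters because the examples in this post were written against Tweepy 3.x. In Tweepy 4.x, `api.me` was removed (use `api.verify_credentials` instead) and `api.search` was renamed to `api.search_tweets`.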

Step 3 – Initial Python code and connecting to Twitter

You are all set to start writing Python code to access, process and analyse Tweets.

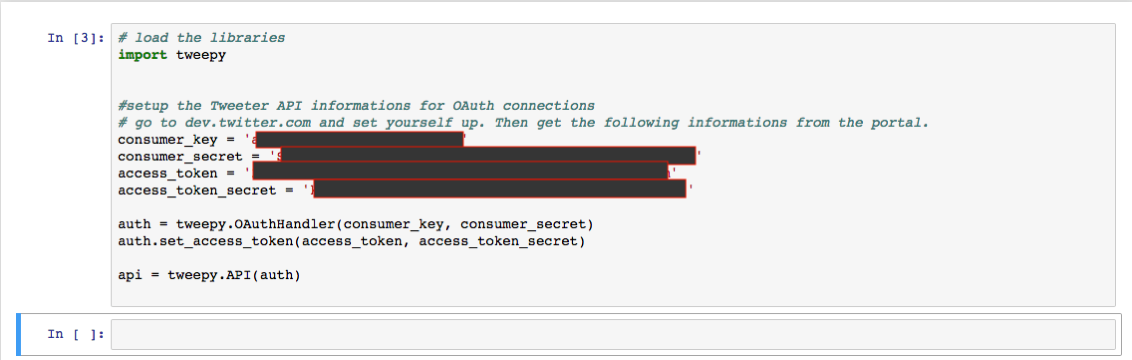

The first thing you need to do is import the tweepy library. After that you use the consumer and access token values shown on the Twitter web page from Step 1 to create an authorised connection to the Twitter API.

After you have filled in your consumer and access token values and run this code, you will not see any output. That is expected, as the code only sets up the connection.
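The connection code is not reproduced in the text above, so here is a minimal sketch of what such a connection typically looks like with Tweepy. It assumes the four values from Step 1 are stored in environment variables; the variable names (`TWITTER_CONSUMER_KEY` and so on) and the helper names `load_credentials` and `connect` are my own choices, not something Twitter or Tweepy prescribes.

```python
import os

# Hypothetical environment variable names; use whatever names suit your setup.
CREDENTIAL_VARS = (
    "TWITTER_CONSUMER_KEY",
    "TWITTER_CONSUMER_SECRET",
    "TWITTER_ACCESS_TOKEN",
    "TWITTER_ACCESS_TOKEN_SECRET",
)


def load_credentials(environ=None):
    """Read the four Twitter codes, failing early if any of them is missing."""
    environ = os.environ if environ is None else environ
    missing = [name for name in CREDENTIAL_VARS if not environ.get(name)]
    if missing:
        raise RuntimeError("Missing credentials: " + ", ".join(missing))
    return tuple(environ[name] for name in CREDENTIAL_VARS)


def connect(environ=None):
    """Create an authorised tweepy.API object from the stored credentials."""
    import tweepy  # imported here so the credential check works without tweepy

    consumer_key, consumer_secret, access_token, access_token_secret = (
        load_credentials(environ)
    )
    auth = tweepy.OAuthHandler(consumer_key, consumer_secret)
    auth.set_access_token(access_token, access_token_secret)
    return tweepy.API(auth)
```

Calling `api = connect()` gives an `api` object like the one used in the remaining steps. Keeping the codes in environment variables rather than in the script also means you can share the script without sharing your credentials.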

Step 4 – Get User Twitter information

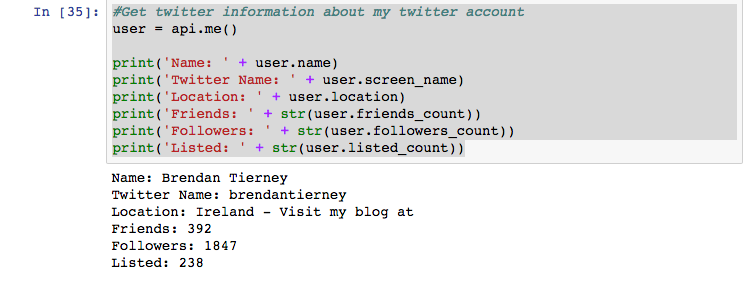

The easiest way to start exploring Twitter is to find out information about your own Twitter account. There is an API function called 'me' that gathers all the user object details from Twitter. From there you can print these out to screen or do other things with them. The following is an example using my Twitter account.

    # Get Twitter information about my Twitter account
    user = api.me()
    print('Name: ' + user.name)
    print('Twitter Name: ' + user.screen_name)
    print('Location: ' + user.location)
    print('Friends: ' + str(user.friends_count))
    print('Followers: ' + str(user.followers_count))
    print('Listed: ' + str(user.listed_count))

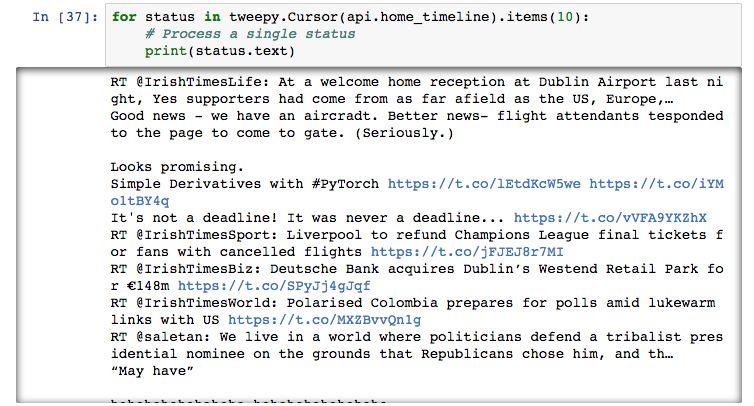
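One thing to watch with the string concatenation above: it raises a `TypeError` if a field such as `location` comes back as `None`. A slightly more defensive variant is sketched below. The helper name `format_user_summary` is hypothetical, and it relies only on the attribute names already used above.

```python
from types import SimpleNamespace


def format_user_summary(user):
    """Build the same summary as above, tolerating fields that are None."""
    fields = [
        ("Name", user.name),
        ("Twitter Name", user.screen_name),
        ("Location", user.location),
        ("Friends", user.friends_count),
        ("Followers", user.followers_count),
        ("Listed", user.listed_count),
    ]
    return "\n".join(
        f"{label}: {'' if value is None else value}" for label, value in fields
    )


# Stand-in object with made-up values, so the sketch runs without a connection.
example_user = SimpleNamespace(
    name="Example User",
    screen_name="example_user",
    location=None,
    friends_count=10,
    followers_count=20,
    listed_count=3,
)
print(format_user_summary(example_user))
```

With a live connection you would pass the object returned by `api.me()` instead of the stand-in.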

You can also list the last X tweets from your timeline. The following takes the last 10 tweets.

    for tweet in tweepy.Cursor(api.home_timeline).items(10):
        # Process a single status
        print(tweet.text)

An alternative is to call home_timeline directly. This returns only 20 records, whereas the Cursor example above can return any number of tweets.

    public_tweets = api.home_timeline()
    for tweet in public_tweets:
        print(tweet.text)
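Printing is fine for a first look, but for later analysis it is usually handier to keep the tweets in a list. The following sketch copies two attributes of each status, `created_at` and `text`, into plain dictionaries. The function name `tweets_to_rows` and the stand-in tweets are made up for illustration.

```python
from datetime import datetime
from types import SimpleNamespace


def tweets_to_rows(tweets):
    """Convert an iterable of status objects into a list of plain dictionaries."""
    return [
        {"created_at": tweet.created_at, "text": tweet.text}
        for tweet in tweets
    ]


# Stand-in statuses with made-up content; a real run would pass
# tweepy.Cursor(api.home_timeline).items(10) instead.
sample_tweets = [
    SimpleNamespace(created_at=datetime(2018, 5, 1, 9, 30), text="First example tweet"),
    SimpleNamespace(created_at=datetime(2018, 5, 1, 9, 45), text="Second example tweet"),
]

rows = tweets_to_rows(sample_tweets)
for row in rows:
    print(row["created_at"], row["text"])
```

A list of dictionaries like this is easy to write to a file or load into whatever analysis tool you prefer.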

Step 5 – Get Tweets based on a condition

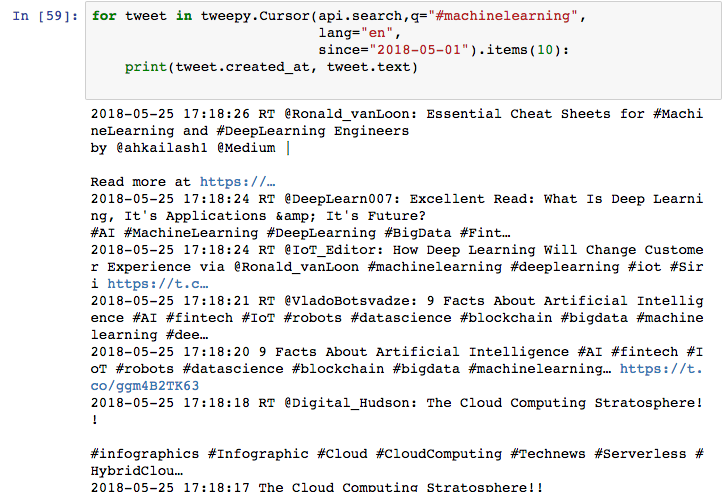

Tweepy comes with a search function that allows you to specify some text you want to search for. This can be hashtags, particular phrases, users, etc. The following is an example of searching for a hashtag.

    for tweet in tweepy.Cursor(api.search, q="#machinelearning",
                               lang="en",
                               since="2018-05-01").items(10):
        print(tweet.created_at, tweet.text)

You can apply additional search criteria, such as restricting the search to a date range or limiting the number of tweets returned.
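Several of these criteria can be written directly into the query string using Twitter's standard search operators, such as `since:`, `until:` and `-filter:retweets`. Here is a sketch of a small helper that assembles such a query; the function name `build_query` and its parameters are my own, not part of Tweepy.

```python
def build_query(term, since=None, until=None, exclude_retweets=False):
    """Assemble a Twitter search query string from a term and optional filters."""
    parts = [term]
    if since:
        parts.append(f"since:{since}")  # tweets sent since this date (YYYY-MM-DD)
    if until:
        parts.append(f"until:{until}")  # tweets sent before this date (YYYY-MM-DD)
    if exclude_retweets:
        parts.append("-filter:retweets")
    return " ".join(parts)


query = build_query("#machinelearning", since="2018-05-01",
                    until="2018-05-08", exclude_retweets=True)
print(query)
# prints: #machinelearning since:2018-05-01 until:2018-05-08 -filter:retweets
```

The resulting string can be passed as the `q` argument of the search call, with `.items(n)` controlling how many tweets are returned. Keep in mind that the standard search only reaches back about a week.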

Check out the other blog posts in this series of Twitter Analytics using Python.
