The momentum in the adoption of DevOps practices appears to be growing as more organisations see the benefits of increased operational efficiency, shorter release cycles, increased quality through fewer defects and lower risk of application outages.

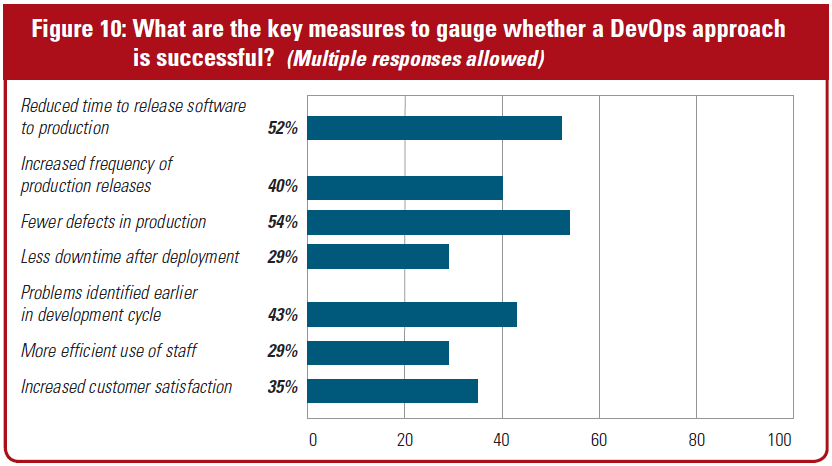

According to a 2016 survey conducted by Unisphere and sponsored by Quest Software entitled “The Current State and Adoption of DevOps”, there are various measures by which the adoption of a DevOps approach is considered successful.

Source: “The Current State and Adoption of DevOps” – Unisphere Research/Quest Software

Figure 1: How do you measure whether DevOps is successful?

According to most of the companies that presented at some of this year’s UK-based DevOps conferences, such as Continuous Lifecycle and the DevOps Enterprise Summit in London, successfully embracing DevOps has as much to do with accepting cultural change, including the necessary breakdown of traditional organisational silos and changes to business process, as it does with technology. Tooling alone is not sufficient to accomplish what is a massive change for a lot of companies.

Bringing database changes into the DevOps pipeline is where it gets a little tricky, particularly as companies try to replicate what they may already be doing on the application side. Unit testing and static code review are well-established processes for Java developers and the like, and application teams have been using automated build processes for years, but in database development these practices rarely exist.

According to a recent Redgate survey entitled “The State of Database DevOps”, just 49% of companies practising Continuous Integration included databases in that process, and just 53% of those practising Continuous Deployment did so.

So let’s analyse some of the different considerations that companies have to make in order to successfully implement such a bold initiative.

People

The adoption of DevOps requires a top-to-bottom commitment within the organisation and the breaking down of many traditional boundaries between development, operations and other groups.

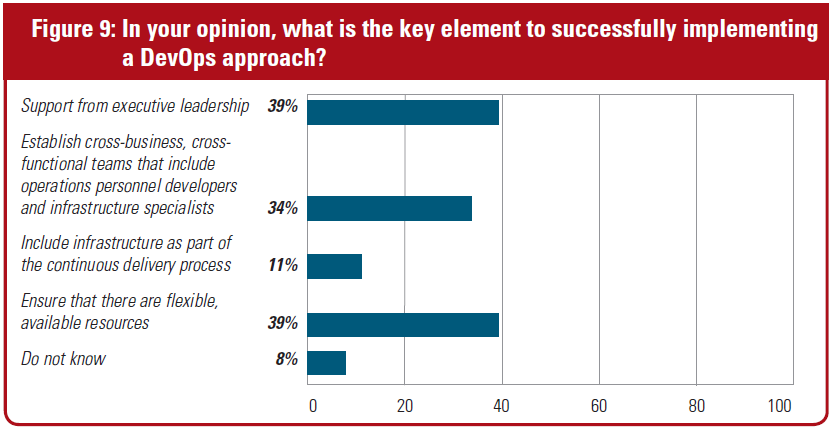

According to the 2016 Unisphere/Quest survey mentioned above, 39% of respondents cited support from executive leadership as the key element in successfully implementing DevOps.

Source: “The Current State and Adoption of DevOps” – Unisphere Research/Quest Software

Figure 2: What is the key element in successfully implementing DevOps?

Engagements with companies attending the recent DevOps conferences mentioned above confirmed that people are indeed an essential part of embracing DevOps.

There were many discussions regarding the use of “tribes”: groups of individuals within each product area, with representatives from many functional groups (dev, test, QA, operations, risk management, etc.), formed to streamline the delivery process. Some organisations have already made a start:

According to the same Unisphere/Quest survey, 74% of respondents said that their organisation already had database developers and DBAs working together on the same team to facilitate application development.

Processes

Most companies building applications with a database backend don’t have a reliable, repeatable process for unit testing database code and don’t perform objective, standardised code reviews. In our experience of working with many Toad customers who need to bring database development into their existing automated processes, this is the first major hurdle they encounter.

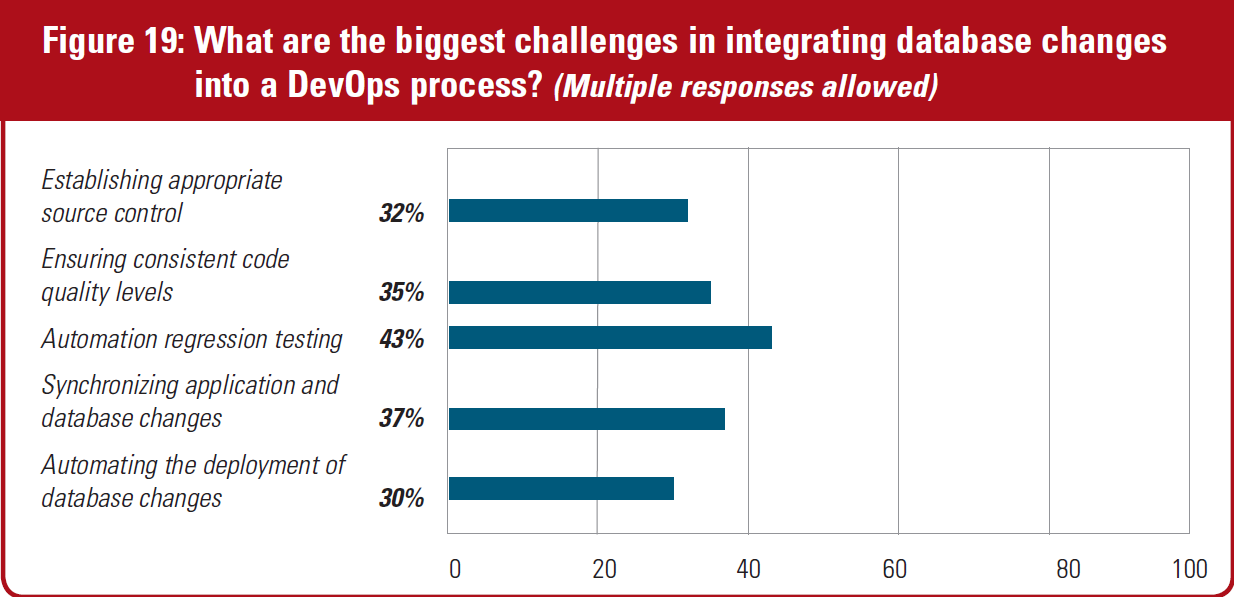

According to the same survey, respondents saw multiple challenges in bringing database changes into DevOps:

Source: “The Current State and Adoption of DevOps” – Unisphere Research/Quest Software

Figure 3: The biggest challenges in integrating DB changes into DevOps

In my experience, most companies that have reasonably large development teams already use source control. However, most don’t realise that there is more to do to make it effective as part of an automated build process, where you need to store more than just code changes.

As you can see in Figure 3, expecting mainstream database developers to suddenly start creating unit tests is the biggest challenge, particularly for Oracle PL/SQL code, which can be quite complex and often uses advanced datatypes. Trying to perform this type of testing as part of existing application testing (JUnit, Ruby, etc.) isn’t really viable, so you need a dedicated PL/SQL testing solution.

As in application regression testing, test failures need to be reported within the build process with a feedback loop to development to address the issues before the build can be deployed.
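As a rough illustration of that feedback loop, the sketch below turns a set of unit test results into a report and an exit code. It assumes the CI server fails a build step that exits with a non-zero status, which is how shell steps in servers such as Jenkins typically behave. The names `UnitTestResult` and `summarise` are hypothetical and are not part of any Toad product.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class UnitTestResult:
    """One database unit test outcome (hypothetical structure)."""
    name: str
    passed: bool
    message: str = ""


def summarise(results: Iterable[UnitTestResult]) -> Tuple[int, str]:
    """Return (exit_code, report) for a batch of test results.

    exit_code is 0 when every test passed and 1 otherwise, so a
    build step can hand it straight to sys.exit().
    """
    results = list(results)
    failures = [r for r in results if not r.passed]
    lines = [f"{len(results)} tests run, {len(failures)} failed"]
    # List each failure so developers can see what to fix.
    lines += [f"FAIL {r.name}: {r.message}" for r in failures]
    return (1 if failures else 0), "\n".join(lines)
```

A build script would print the report and call `sys.exit()` with the returned code, so a failing test stops the build before anything is deployed.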

Static code reviews are traditionally very subjective, lack depth and are often incomplete, so they require a different approach if they are to play an effective part in an automated build process. We need a rules-based approach that can be applied consistently across different code, together with the ability to define a minimum quality threshold below which code is considered to have failed.
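To make the idea of a rules-based review with a minimum threshold concrete, here is a minimal sketch. The three rules, their weights and the default threshold of 80 are invented for illustration, and the regular expressions are far cruder than a real PL/SQL analyser would be.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Rule:
    name: str
    weight: int                      # relative importance of the rule
    passes: Callable[[str], bool]    # True when the source complies


def _absent(pattern: str) -> Callable[[str], bool]:
    """Build a check that passes when the pattern does not occur."""
    compiled = re.compile(pattern, re.IGNORECASE)
    return lambda source: compiled.search(source) is None


# Hypothetical rule set, for illustration only.
RULES: List[Rule] = [
    Rule("no SELECT *", 3, _absent(r"select\s+\*")),
    Rule("no WHEN OTHERS THEN NULL", 5, _absent(r"when\s+others\s+then\s+null")),
    Rule("no COMMIT in program unit", 2, _absent(r"\bcommit\s*;")),
]


def review(source: str, threshold: float = 80.0,
           rules: List[Rule] = RULES) -> Dict[str, object]:
    """Score the source from 0 to 100 and compare it with the threshold."""
    violations = [r.name for r in rules if not r.passes(source)]
    total = sum(r.weight for r in rules)
    earned = sum(r.weight for r in rules if r.passes(source))
    score = 100.0 * earned / total
    return {"score": score, "violations": violations,
            "passed": score >= threshold}
```

Because the same rules and threshold are applied to every piece of code, the outcome does not depend on who happens to perform the review.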

Technology

Technology plays a key role in successful DevOps adoption and while many organisations already have some IT infrastructure in place which supports version control, Continuous Integration and Continuous Deployment within their application teams, database teams are typically not a part of this.

The problem with bringing database changes into DevOps is that the state of the database is continually changing, thereby presenting a “moving target” which can hinder successful deployments. Processes such as regression testing and code review need to be automated in the same way they are in application development.

To play an effective part within an automated build process using technologies such as Jenkins, Bamboo, TeamCity, etc., database unit testing and code reviews really need to be callable functions, again in the same way they are for application development.

Toad for Oracle Developer Edition has all the necessary functions to enable Oracle database development to start to become an essential part of your DevOps pipeline:

- Team Coding – integrates with most proprietary source control providers to enable developers to seamlessly work on database objects and have the VCS files synchronised automatically

- PL/SQL unit testing – whether you want to follow Test Driven Development or simply create unit tests as you run your code, Code Tester for Oracle enables a suite of tests to be created quickly and stored in a repository. Tests can also be checked into source control so they can become a part of an automated build process.

- Static code review – select from a library of nearly 200 rules in Code Analysis which look at all aspects of PL/SQL code development practices so you can apply rules consistently across all your code.

- SQL optimisation – getting optimal performance from your SQL and PL/SQL code quickly and effectively in development makes for lower cost and risk as code makes its way through the DevOps pipeline. SQL Optimizer automatically generates rewrites in seconds and lets you choose the best alternative.

- Database, Schema and Data Compare – the fast, effective way to compare database configurations, schema objects and table data across multiple environments and build a synchronisation script for deployment.

Later this year, we will be announcing a brand new Toad product which will provide the ability to call PL/SQL unit tests and code reviews on code checked into source control as a part of an automated build process using Jenkins, Hudson, Bamboo and many others.

In order to automate the deployment of build artifacts into other parts of the delivery pipeline such as QA, you will also have the ability to compare objects between source and target (including data) and deploy changes to the target database.
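The compare step described here can be pictured with a small, tool-agnostic sketch. It assumes each environment is represented as a mapping from object name to its DDL text. The function names are hypothetical and the code does not reflect how any Toad product works internally.

```python
from typing import Dict, List


def normalise(ddl: str) -> str:
    """Collapse whitespace so formatting differences are ignored."""
    return " ".join(ddl.split())


def diff_objects(source: Dict[str, str],
                 target: Dict[str, str]) -> Dict[str, List[str]]:
    """Work out what must change in target to match source."""
    common = source.keys() & target.keys()
    return {
        # In source only: must be created in the target.
        "create": sorted(source.keys() - target.keys()),
        # In target only: candidates for removal.
        "drop": sorted(target.keys() - source.keys()),
        # In both, but with different definitions.
        "replace": sorted(n for n in common
                          if normalise(source[n]) != normalise(target[n])),
    }
```

A deployment script would then be generated from the three lists. Anything under "drop" usually deserves a manual check before it is applied.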

For more information on some of the topics discussed above, take a few minutes to listen to this interview between The Register’s Senior Editor, Gavin Clarke, and me, which took place on 20th June in London.

https://whitepapers.theregister.co.uk/paper/view/5825/whats-holding-back-database-teams-in-devops
