As more and more companies move to an Agile process for their application development, how to automate testing has become a popular question for us. How do you automate Benchmark Factory (BMF) testing, either to fit into a build process (using Jenkins, for example) or simply to execute jobs from home-grown scripts? Before version 7.6, BMF provided a COM interface that allowed some basic operations, like running an existing job or changing a job’s connection. The COM interface was limited and did not allow new jobs, tests, or connections to be created, which limited its usefulness. With Benchmark Factory 7.6 we now provide a REST interface that offers the same functionality as the COM interface and also lets a user create, modify, and delete jobs, tests, and connections. In the next few blogs I will go over the REST API and show some examples. So let’s get started!

The BMF REST API is available via HTTP requests to port 30100. Here is an example of a simple GET:

http://<bmfmachine>:30100/api/jobs

This will return the XML of all the jobs currently loaded into the BMF jobs view.
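For scripting, the same GET can be issued from Python's standard library. This is only a minimal sketch: the helper names `jobs_url` and `fetch_jobs_xml` are mine, and the UTF-8 decoding is an assumption about the response encoding.

```python
import urllib.request

BMF_PORT = 30100  # fixed REST port described in this post


def jobs_url(host: str) -> str:
    """Build the URL of the jobs collection for a given BMF machine."""
    return f"http://{host}:{BMF_PORT}/api/jobs"


def fetch_jobs_xml(host: str, timeout: float = 10.0) -> str:
    """GET the jobs collection and return the response body as text."""
    with urllib.request.urlopen(jobs_url(host), timeout=timeout) as resp:
        # Assumption: the XML body is UTF-8 encoded.
        return resp.read().decode("utf-8")
```

Calling `fetch_jobs_xml("localhost")` on a machine where the BMF Console is running should return the same XML you would see in a browser.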

A couple of things to know. First, the BMF Console needs to be running for the REST API to work; there is currently no BMF server process. Second, the REST port number cannot be changed, so if you are calling from another machine, you may need to open port 30100 in any firewall between the two machines.
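Before chasing a failing script, it can help to confirm that port 30100 is reachable at all. The sketch below is a plain TCP check, not part of the BMF API; the function name `port_is_open` and the timeout are my own choices.

```python
import socket


def port_is_open(host: str, port: int = 30100, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable hosts.
        return False
```

A `False` result can mean either that a firewall is blocking the port or that the BMF Console is not running on the target machine.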

So now on to the current REST structure.

Jobs (GP)

Job (GDU)

Schedule (GU)

Tests (GP)

Test (GDU)

Connections (GP)

Connection (GDU)

TestRuns (G)

TestRun (GDU) (Update comment only)

Connection (G)

Test (G)

G = GET (retrieves the object’s XML representation)

P = POST (Adds a new object based on the XML supplied in the HTTP data area)

U = PUT (Updates the object to the value(s) supplied in the XML supplied in the HTTP data area)

D = DELETE (Deletes the object)
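If you want a script to check up front whether an operation is supported, the letter codes above can be expanded mechanically. This is just an illustrative helper; `METHOD_CODES`, `TOP_LEVEL`, and `expand_methods` are names I made up, and only the three top-level collections are listed.

```python
# Letter codes from the legend above, mapped to HTTP methods.
METHOD_CODES = {"G": "GET", "P": "POST", "U": "PUT", "D": "DELETE"}

# Top-level collections and their codes, copied from the structure listing.
TOP_LEVEL = {"Jobs": "GP", "Connections": "GP", "TestRuns": "G"}


def expand_methods(codes: str) -> list[str]:
    """Turn a code string such as 'GDU' into a list of HTTP method names."""
    return [METHOD_CODES[c] for c in codes]
```

For example, `expand_methods(TOP_LEVEL["Jobs"])` gives the methods allowed on the jobs collection.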

Most of the GET operations are straightforward, so I will not go through those. Instead, let’s go through adding a connection and then a job that uses that new connection.

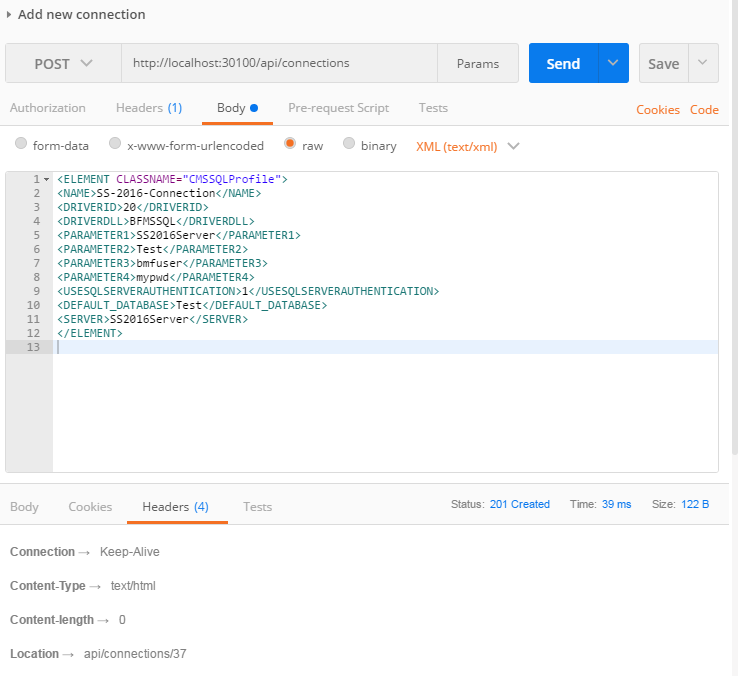

To add a new connection, we do a POST to the connections collection, as shown in the accompanying screenshot.

As you can see, the information provided is fairly straightforward. After you run this command you should get a return code of 201, which is the HTTP status code for Created. The response header will also contain the URL of the resource you just added. That URL identifies the new connection by its zero-based index, but I prefer to access objects by name rather than by index. So to get the newly created connection I would use:

http://localhost:30100/api/connections/SS-2016-Connection
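A rough Python equivalent of the POST and the name-based lookup might look like the following. The XML body itself is whatever you would send per the screenshot and is passed in by the caller; the `Content-Type` header value and the helper names are assumptions on my part.

```python
import urllib.parse
import urllib.request

BASE = "http://localhost:30100/api"


def connection_url(name: str, base: str = BASE) -> str:
    """URL of a single connection, addressed by name rather than by index."""
    return f"{base}/connections/{urllib.parse.quote(name, safe='')}"


def build_add_connection_request(xml_body: bytes,
                                 base: str = BASE) -> urllib.request.Request:
    """Prepare (but do not send) a POST to the connections collection."""
    return urllib.request.Request(
        f"{base}/connections",
        data=xml_body,
        method="POST",
        # Assumption: the server accepts an XML content type.
        headers={"Content-Type": "application/xml"},
    )
```

To send it, pass the request to `urllib.request.urlopen(...)` and read `resp.status` and `resp.headers["Location"]` from the response.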

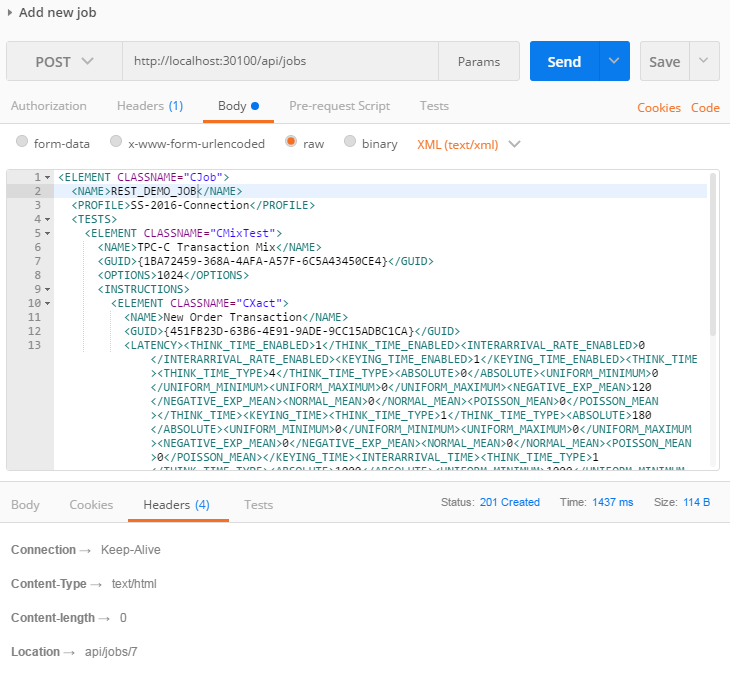

Now that we have a new connection, let’s add a new job and have it use this newly created connection.

As you can see from the above screenshot, compared with a GET of a job, quite a few XML values are not specified. Most object values have defaults, so unless you need to override a default, you don’t need to specify it in the XML of the job to be created. Notice that, just like when adding a connection, if all goes well you will get a return code of 201 and a Location header with the URL of the newly created job. Again, I recommend using the job’s name instead of its index, to avoid problems when others add or remove jobs and change the index.
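In a script, it is worth checking for the 201 and the Location header explicitly rather than assuming the create succeeded. Here is a small sketch; `created_location` is a hypothetical helper, and it accepts any mapping-like headers object, such as the `headers` attribute of a `urllib` response.

```python
def created_location(status: int, headers) -> str:
    """Return the Location header of a successful create, or raise."""
    if status != 201:
        raise RuntimeError(f"expected 201 Created, got {status}")
    location = headers.get("Location")
    if not location:
        raise RuntimeError("201 response carried no Location header")
    return location
```

Keep in mind the returned URL is index-based, so for later calls you may still want to address the job by name.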

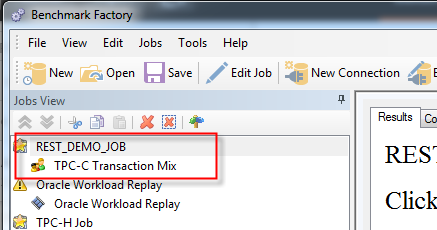

Looking at the BMF Console you can see the newly created job.

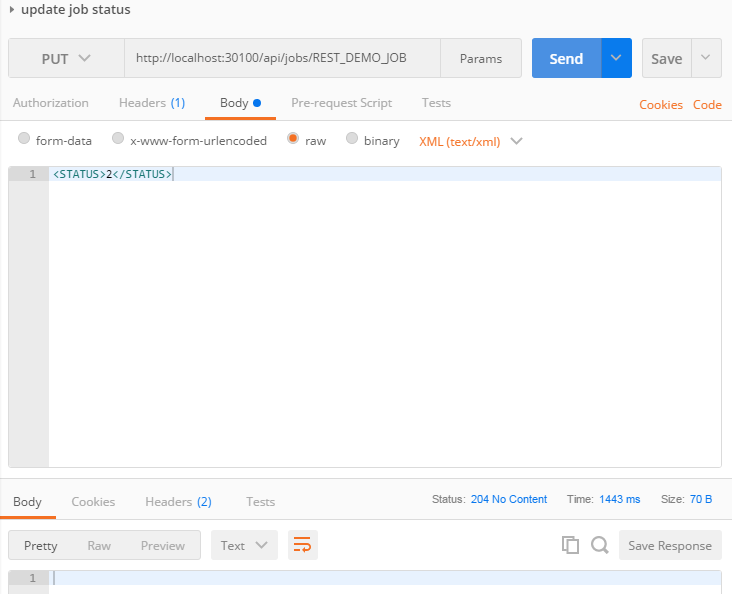

Now the last thing for us to do is run the job. To do this we just need to change the job’s status to Running, which is done with an HTTP PUT of the job’s status property, as shown below.

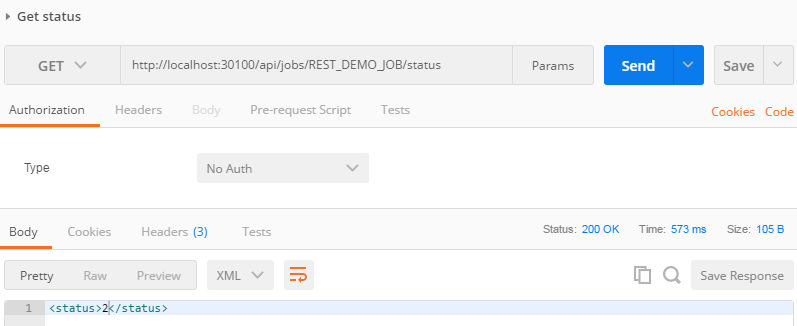
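The PUT could be prepared from Python along these lines. Treat the details as assumptions: I am guessing that the status property lives at `.../jobs/<name>/status`, the XML body must match what the screenshot shows and is supplied by the caller, and `build_status_put` is a name of my own.

```python
import urllib.parse
import urllib.request


def build_status_put(job_name: str, status_xml: bytes,
                     base: str = "http://localhost:30100/api") -> urllib.request.Request:
    """Prepare (but do not send) a PUT that updates a job's status property."""
    # Assumption: the status property is addressed as /jobs/<name>/status.
    url = f"{base}/jobs/{urllib.parse.quote(job_name, safe='')}/status"
    return urllib.request.Request(
        url,
        data=status_xml,
        method="PUT",
        # Assumption: the server accepts an XML content type.
        headers={"Content-Type": "application/xml"},
    )
```

Quoting the job name keeps names containing spaces or other special characters valid in the URL.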

To check the status, we just need to do a GET on the job’s status.

As you can see, the returned status is 2, which is Running. Here are the status values:

Complete = 1

Running = 2

Ready = 3

Hold = 4

Stopping = 5
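In a build script you will usually want to wait for the job to finish. The sketch below wraps the status values above in an enum and polls through a caller-supplied function, since how the number is extracted from the response XML is not shown here. Treating Stopping as still active, and the names `JobStatus` and `wait_while_active`, are my own assumptions.

```python
import time
from enum import IntEnum
from typing import Callable


class JobStatus(IntEnum):
    """Job status values as listed above."""
    COMPLETE = 1
    RUNNING = 2
    READY = 3
    HOLD = 4
    STOPPING = 5


def wait_while_active(fetch_status: Callable[[], int],
                      poll_seconds: float = 5.0,
                      max_polls: int = 720) -> JobStatus:
    """Poll until the job is neither Running nor Stopping; return its status."""
    for _ in range(max_polls):
        status = JobStatus(fetch_status())
        # Assumption: Stopping means the job has not yet settled.
        if status not in (JobStatus.RUNNING, JobStatus.STOPPING):
            return status
        time.sleep(poll_seconds)
    raise TimeoutError("job still active after max_polls checks")
```

Here `fetch_status` would be a small function of yours that does the GET on the job’s status and returns the integer value.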

And that is all you need to do to quickly add a new connection and job and execute the job using the new BMF REST API. In part 2 of this series I will talk about how to perform common modifications that might be done as part of automated test executions. Please tell us what you think in the comments section below!
