Indexing data for fast and efficient retrieval is one of the data preparation tasks in data science. MongoDB is the most commonly used NoSQL database and the fourth most commonly used database (relational or NoSQL). MongoDB is based on the BSON (binary JSON) document model.

Problem

MongoDB provides built-in full-text search capabilities but does not provide advanced indexing and search features.

Solution

Apache Solr is designed primarily for indexing and searching documents rather than for storing data. It is built on Lucene, a high-performance, full-featured text search engine. MongoDB Labs provides the Mongo Connector, which can be used to index MongoDB data in Apache Solr.

In this article we shall use the Mongo Connector to index MongoDB data in Apache Solr.

Setting the Environment

We used a Linux environment on Amazon Elastic Compute Cloud (EC2) for this article. The following software is required.

- Apache Solr
- MongoDB
- Mongo Connector
- Java

Create a directory /solr in which to install the software, and make it readable, writable, and executable by all users (777).

mkdir /solr

chmod 777 /solr

cd /solr

Download and extract the Apache Solr tgz file.

wget http://apache.mirror.vexxhost.com/lucene/solr/5.3.1/solr-5.3.1.tgz

tar -xvf solr-5.3.1.tgz

Download and extract the MongoDB tgz file.

curl -O http://downloads.mongodb.org/linux/mongodb-linux-i686-3.0.6.tgz

tar -zxvf mongodb-linux-i686-3.0.6.tgz

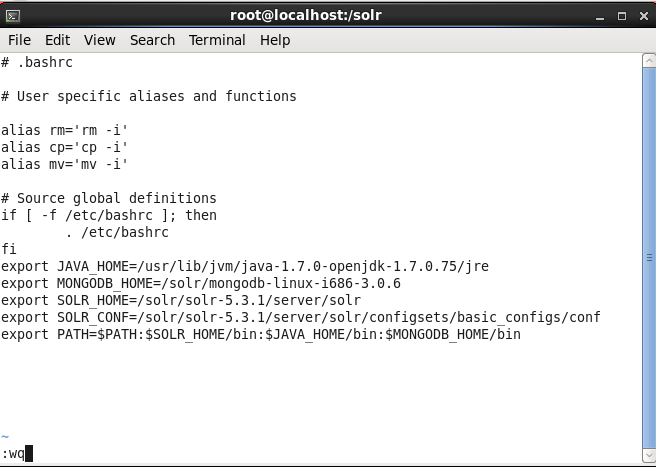

Set environment variables for MongoDB, Apache Solr and Java in the bash script.

vi ~/.bashrc

export MONGODB_HOME=/solr/mongodb-linux-i686-3.0.6

export JAVA_HOME=/usr/lib/jvm/java-1.7.0-openjdk-1.7.0.75/jre

export SOLR_HOME=/solr/solr-5.3.1/server/solr

export SOLR_CONF=/solr/solr-5.3.1/server/solr/configsets/basic_configs/conf

export PATH=$PATH:$SOLR_HOME/bin:$JAVA_HOME/bin:$MONGODB_HOME/bin

The bash script ~/.bashrc is shown in vi.

Installing the Mongo Connector

To install the Mongo Connector run the following command.

pip install mongo-connector

The mongo-connector-2.1 gets installed.

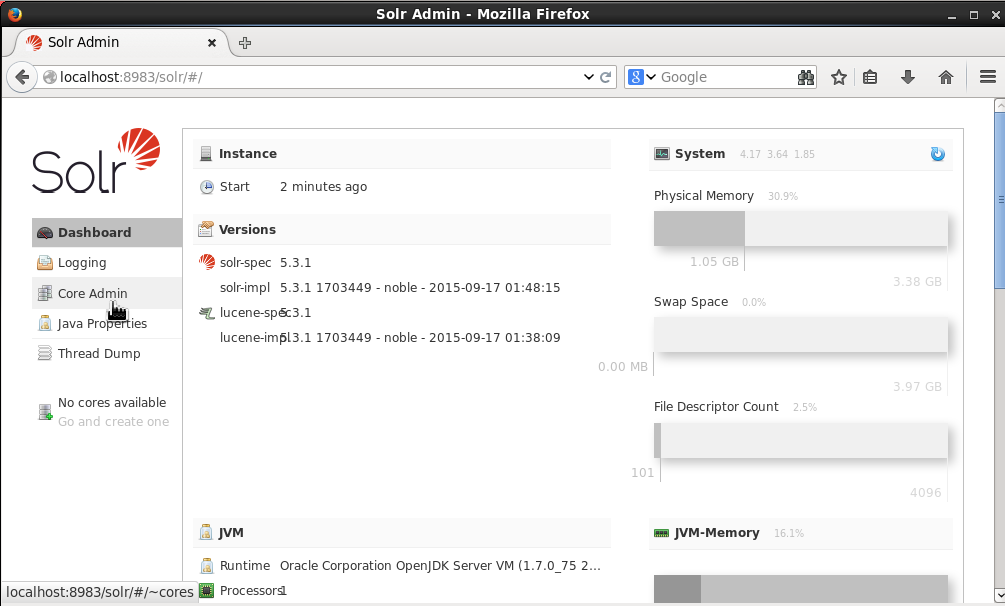

Starting Apache Solr Server

Start the Apache Solr server from the /solr/solr-5.3.1 directory. Run the bin/solr start command to start Apache Solr.

cd /solr/solr-5.3.1

bin/solr start

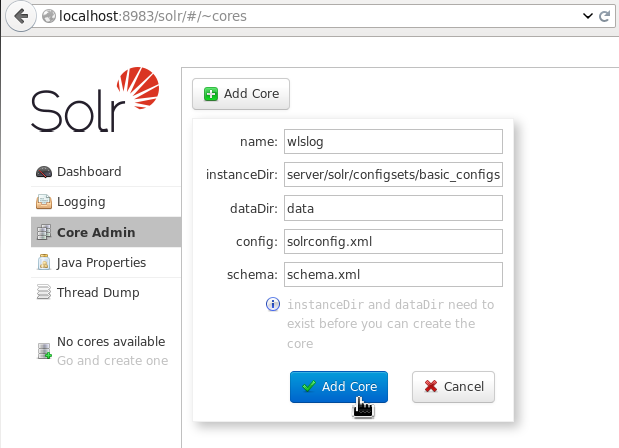

Creating a Solr Core

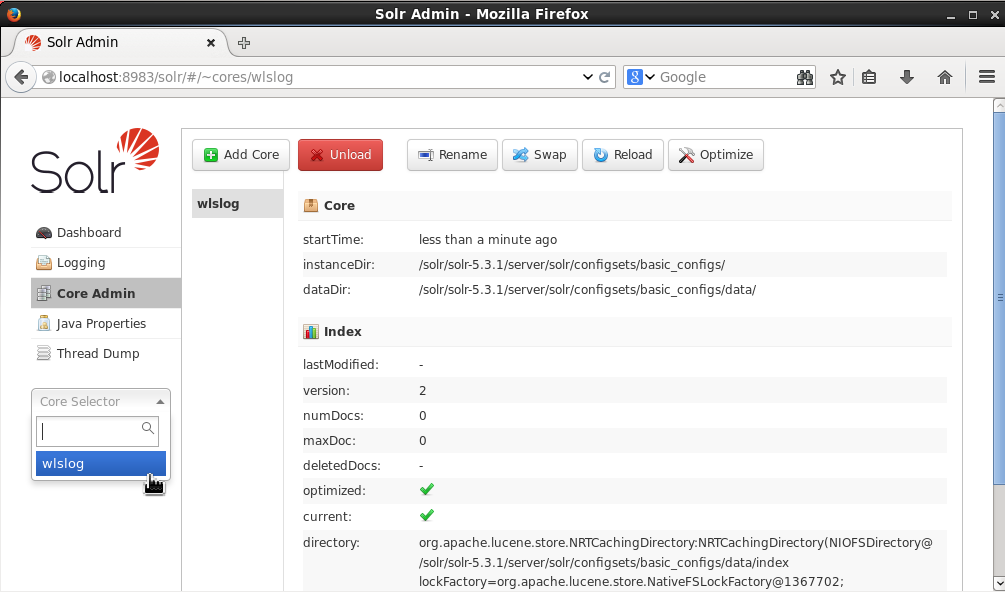

Open the Solr Admin Console at the URL http://localhost:8983/solr/. We need to create a Solr core to index MongoDB data. Select Core Admin in the Solr Admin Dashboard.

In the dialog displayed specify a name (wlslog for example) for the core. Specify the instanceDir as /solr/solr-5.3.1/server/solr/configsets/basic_configs. Specify dataDir as “data”. The instanceDir and the dataDir must exist before a core is created. Specify the config as solrconfig.xml and schema as schema.xml. Click on Add Core.

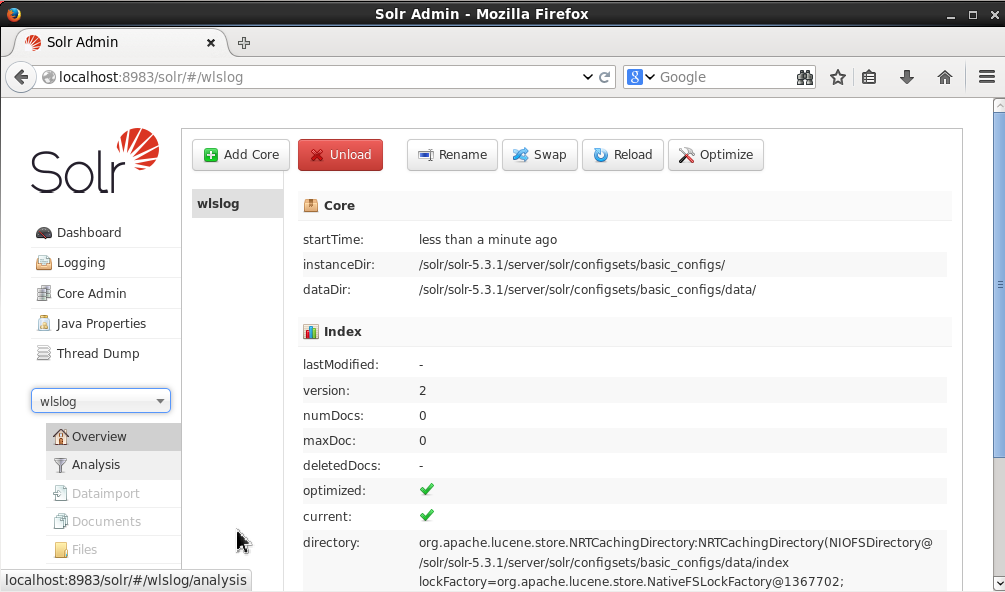

A Solr core called wlslog gets created.
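The same core could also be created without the Admin Console, through Solr's CoreAdmin API. The following is a minimal Python sketch, assuming the server address and paths used in this article. The helper name core_create_url is hypothetical, and the sketch only builds the request URL rather than sending it.

```python
from urllib.parse import urlencode


def core_create_url(base_url, name, instance_dir, data_dir="data",
                    config="solrconfig.xml", schema="schema.xml"):
    """Build a CoreAdmin CREATE request URL for the given core settings."""
    params = [
        ("action", "CREATE"),
        ("name", name),
        ("instanceDir", instance_dir),
        ("dataDir", data_dir),
        ("config", config),
        ("schema", schema),
        ("wt", "json"),
    ]
    # urlencode percent-encodes the slashes in the directory path.
    return base_url.rstrip("/") + "/admin/cores?" + urlencode(params)


# Values below mirror the ones entered in the Add Core dialog.
url = core_create_url(
    "http://localhost:8983/solr",
    "wlslog",
    "/solr/solr-5.3.1/server/solr/configsets/basic_configs",
)
print(url)
```

Opening the printed URL in a browser, or fetching it with urllib.request.urlopen, would send the request to a running Solr server.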

Select the wlslog core in the Core Selector.

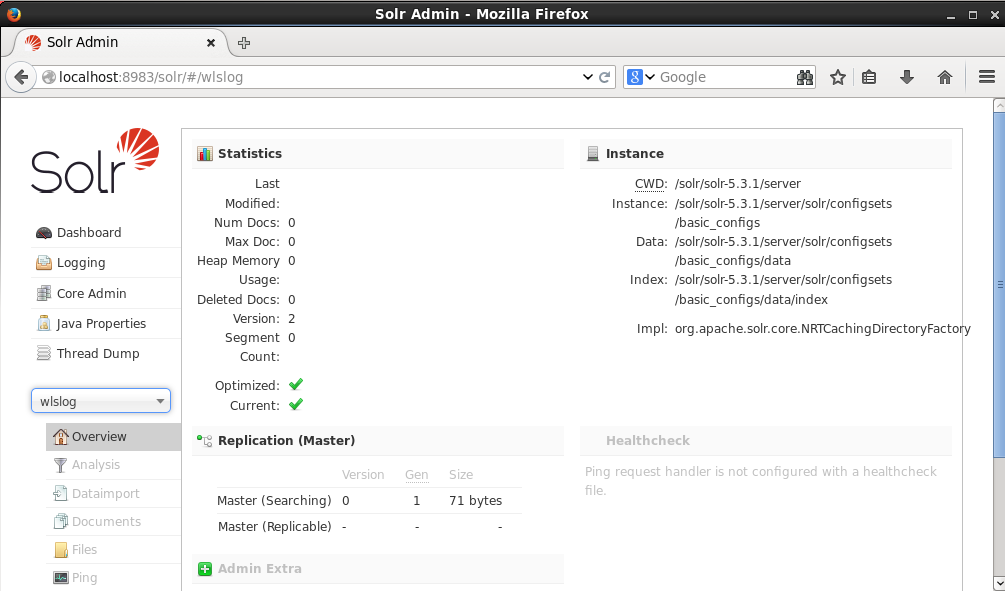

The Overview tab displays the Statistics and Instance associated with the wlslog core.

Configuring Apache Solr

Next, we need to configure Apache Solr. The fields in the MongoDB documents to be indexed are specified in the schema.xml configuration file. Open the schema.xml in a vi editor.

vi /solr/solr-5.3.1/server/solr/configsets/basic_configs/conf/schema.xml

Add the fields time_stamp, category, type, servername, code, and msg. Mongo Connector also stores the metadata associated with each MongoDB document it indexes in the fields ns and _ts, so add the ns and _ts fields to schema.xml as well.

<?xml version="1.0" encoding="UTF-8" ?>

<schema name="example" version="1.5">

<field name="time_stamp" type="string" indexed="true" stored="true" multiValued="false" />

<field name="category" type="string" indexed="true" stored="true" multiValued="false" />

<field name="type" type="string" indexed="true" stored="true" multiValued="false" />

<field name="servername" type="string" indexed="true" stored="true" multiValued="false" />

<field name="code" type="string" indexed="true" stored="true" multiValued="false" />

<field name="msg" type="string" indexed="true" stored="true" multiValued="false" />

<field name="_ts" type="long" indexed="true" stored="true" />

<field name="ns" type="string" indexed="true" stored="true"/>

<field name="_version_" type="long" indexed="true" stored="true"/>

<field name="id" type="string" indexed="true" stored="true" required="true" multiValued="false" />

<uniqueKey>id</uniqueKey>

<fieldType name="string" class="solr.StrField" sortMissingLast="true" />

<fieldType name="boolean" class="solr.BoolField" sortMissingLast="true"/>

<fieldType name="long" class="solr.TrieLongField" precisionStep="0" positionIncrementGap="0"/>

</schema>
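Because the field definitions follow one pattern, they could be generated rather than typed by hand. The following is a rough Python sketch, assuming the field names from the listing above. The helper names field_element and schema_field_lines are hypothetical, and the output still has to be pasted into schema.xml manually.

```python
import xml.etree.ElementTree as ET

# Field names taken from the schema.xml listing in this article.
LOG_FIELDS = ["time_stamp", "category", "type", "servername", "code", "msg"]


def field_element(name, field_type="string", multi_valued=None):
    """Create a <field> element that is both indexed and stored."""
    attrs = {"name": name, "type": field_type,
             "indexed": "true", "stored": "true"}
    if multi_valued is not None:
        attrs["multiValued"] = "true" if multi_valued else "false"
    return ET.Element("field", attrs)


def schema_field_lines():
    """Return one serialized <field> element per log field, plus _ts and ns."""
    elements = [field_element(name, multi_valued=False) for name in LOG_FIELDS]
    elements.append(field_element("_ts", "long"))  # Mongo Connector metadata
    elements.append(field_element("ns"))           # Mongo Connector metadata
    return [ET.tostring(element, encoding="unicode") for element in elements]


lines = schema_field_lines()
for line in lines:
    print(line)
```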

Fields not defined in schema.xml are not indexed. We also need to configure the org.apache.solr.handler.admin.LukeRequestHandler request handler in solrconfig.xml. Requests to the Solr server are routed through request handlers. Open solrconfig.xml in the vi editor.

vi ./solr-5.3.1/server/solr/configsets/basic_configs/conf/solrconfig.xml

Specify the request handler for the Mongo Connector.

<requestHandler name="/admin/luke" class="org.apache.solr.handler.admin.LukeRequestHandler" />

Also set openSearcher to true in the autoCommit settings so that data from MongoDB is committed and becomes searchable after the configured time (maxTime, in milliseconds).

<autoCommit>

<maxTime>15000</maxTime>

<openSearcher>true</openSearcher>

</autoCommit>

After modifying schema.xml and solrconfig.xml, the Solr server needs to be restarted.

bin/solr restart

Starting MongoDB Server

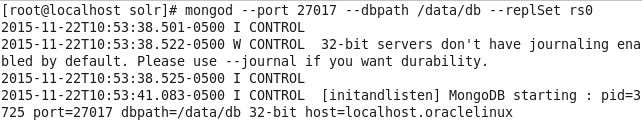

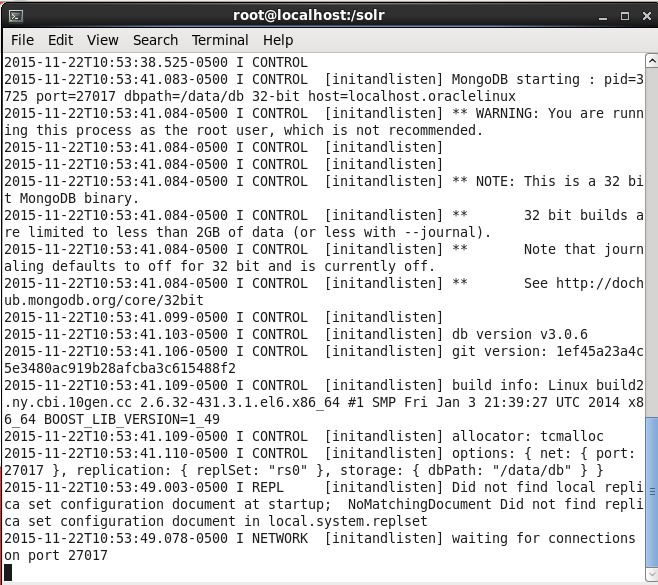

Mongo Connector requires a MongoDB replica set to be running for it to index MongoDB data in Solr. A replica set is a cluster of MongoDB servers that implements replication and automatic failover. A replica set could consist of only a single server, which is what we shall use. Start a single instance of the MongoDB server using the following command, in which the port is specified as 27017, the data directory for MongoDB is specified as /data/db, and the replica set name is specified as rs0 with the --replSet option.

mongod --port 27017 --dbpath /data/db --replSet rs0

When the preceding command is run, the MongoDB server starts with the replica set name rs0.

Starting MongoDB Shell

Start the MongoDB shell with the following command.

mongo

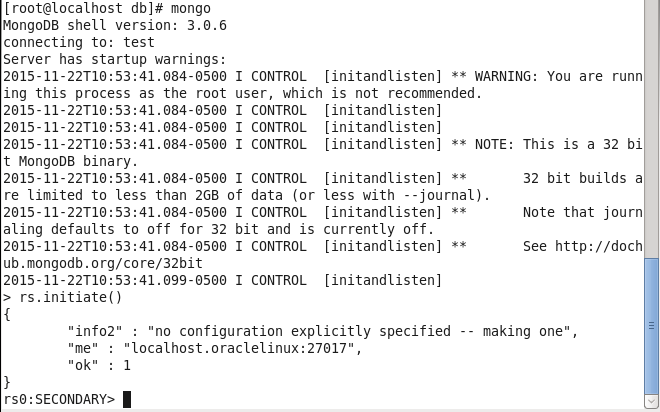

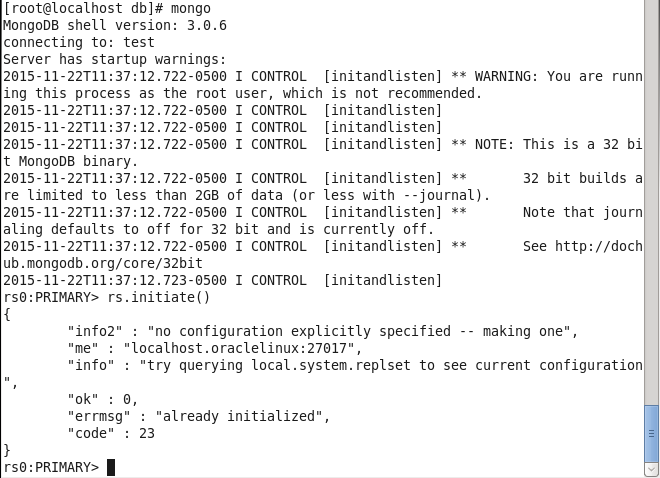

MongoDB shell gets started. We need to initiate the replica set. Run the following command to initiate the replica set.

rs.initiate()

Replica set gets initiated.

If the replica set has already been initiated and the rs.initiate() command is run again, an error message reports that the replica set is “already initialized”.
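Called without arguments, rs.initiate() uses a default configuration. A configuration document can also be passed explicitly. The following Python sketch builds such a document for the single-server replica set used here. The helper name replica_set_config is hypothetical, and the host value assumes the server started above.

```python
def replica_set_config(name, hosts):
    """Build a replica set configuration with one member per host.

    Member ids are assigned in order, starting from 0.
    """
    if not hosts:
        raise ValueError("a replica set needs at least one host")
    return {
        "_id": name,
        "members": [{"_id": index, "host": host}
                    for index, host in enumerate(hosts)],
    }


# Single-member replica set matching the mongod command in this article.
config = replica_set_config("rs0", ["localhost:27017"])
print(config)
```

A document of this shape could be passed to rs.initiate() in the mongo shell in place of the empty argument list.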

Creating a MongoDB Collection

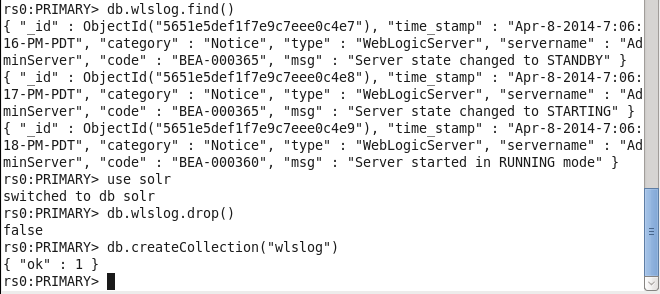

In this section we shall create a MongoDB collection to store documents. Switch to the “solr” database with the following command. MongoDB creates a database implicitly the first time data is stored in it.

use solr

We shall store MongoDB documents in a collection called “wlslog”. First, find out whether the collection already exists and has documents in it with the following command.

db.wlslog.find()

If some documents get listed, the wlslog collection exists and has documents in it. In that case, drop the wlslog collection with the following command.

db.wlslog.drop()

Create the wlslog collection again with the following command.

db.createCollection("wlslog")

The wlslog collection gets created.

Adding Data to MongoDB Collection

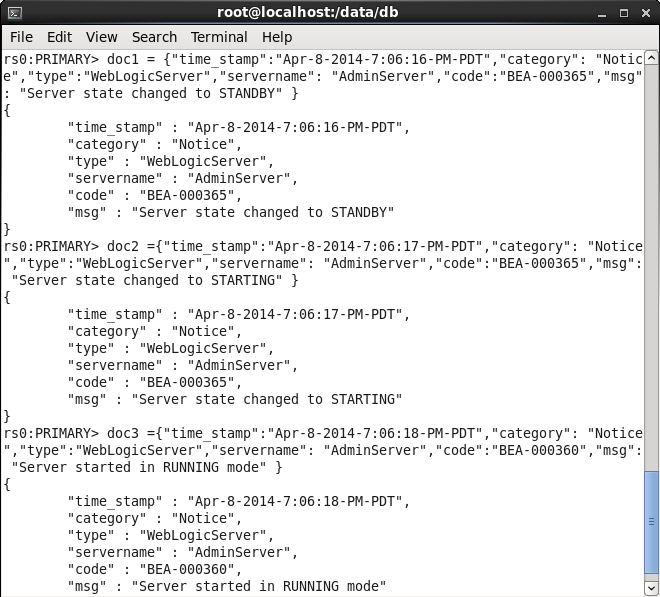

Next, we shall add some documents to the wlslog collection. Create JSON for three documents doc1, doc2, and doc3.

doc1 = {"time_stamp":"Apr-8-2014-7:06:16-PM-PDT","category": "Notice","type":"WebLogicServer",

"servername": "AdminServer","code":"BEA-000365","msg": "Server state changed to STANDBY" }

doc2 ={"time_stamp":"Apr-8-2014-7:06:17-PM-PDT","category": "Notice","type":"WebLogicServer",

"servername": "AdminServer","code":"BEA-000365","msg": "Server state changed to STARTING" }

doc3 ={"time_stamp":"Apr-8-2014-7:06:18-PM-PDT","category": "Notice","type":"WebLogicServer",

"servername": "AdminServer","code":"BEA-000360","msg": "Server started in RUNNING mode" }

Three documents get created.

Add the three documents to MongoDB with the following command:

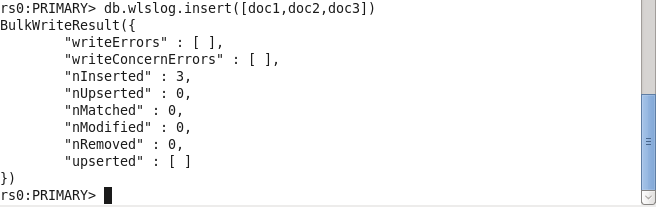

db.wlslog.insert([doc1,doc2,doc3])

The three documents get added. The nInserted value of 3 indicates that 3 documents have been added.

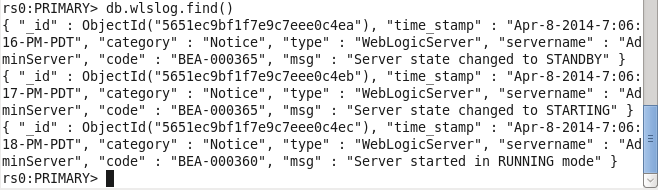

Query the wlslog collection with the find() method.

db.wlslog.find()

The three documents get listed.
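Since fields that are not defined in schema.xml are not indexed, it can help to compare a document's keys against the schema before starting the connector. The following is a small Python sketch of such a check. The helper name fields_missing_from_schema is hypothetical, and the SCHEMA_FIELDS set is copied by hand from the schema.xml shown earlier.

```python
# Field names defined in the schema.xml used in this article.
SCHEMA_FIELDS = {
    "time_stamp", "category", "type", "servername", "code", "msg",
    "_ts", "ns", "_version_", "id",
}


def fields_missing_from_schema(doc, schema_fields=SCHEMA_FIELDS):
    """Return the sorted document keys that have no matching schema field.

    The _id key is skipped because it is mapped to the Solr unique key id.
    """
    return sorted(key for key in doc
                  if key != "_id" and key not in schema_fields)


doc1 = {
    "time_stamp": "Apr-8-2014-7:06:16-PM-PDT", "category": "Notice",
    "type": "WebLogicServer", "servername": "AdminServer",
    "code": "BEA-000365", "msg": "Server state changed to STANDBY",
}
print(fields_missing_from_schema(doc1))
```

An empty list means every field in the document has a counterpart in the schema.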

Starting the Mongo Connector and Indexing MongoDB Data

Start the Mongo Connector to connect to MongoDB and Apache Solr and index the MongoDB data. The Mongo Connector is run with the mongo-connector command. Specify the following command parameters.

| Parameter | Description | Value |
| --- | --- | --- |
| --unique-key | The unique key in Solr server. The unique key in MongoDB documents is _id and is mapped to the id key in Solr. | id |
| -n | The MongoDB collection to index. The format is database.collection. | solr.wlslog |
| -m | The MongoDB host and port to connect to. | localhost:27017 |
| -t | The Apache Solr URL including the core. | http://localhost:8983/solr/wlslog |
| -d | The document manager for Apache Solr. | solr_doc_manager |

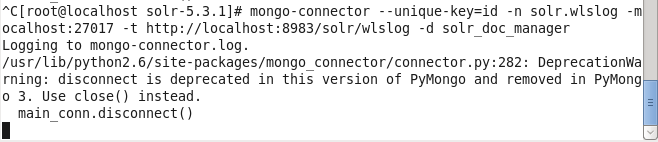

With both MongoDB and Apache Solr running, run the mongo-connector command to index MongoDB data in Apache Solr.

mongo-connector --unique-key=id -n solr.wlslog -m localhost:27017 -t http://localhost:8983/solr/wlslog -d solr_doc_manager

The Mongo Connector gets started.
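When the command is copied from a web page, typographic dashes can slip in where plain hyphens are needed. One way to avoid that is to assemble the argument list in a script. The following Python sketch does so with the parameter values from this article. The helper name connector_command is hypothetical, and the sketch prints the command rather than running it.

```python
import shlex


def connector_command(namespace, mongo_addr, solr_url,
                      unique_key="id", doc_manager="solr_doc_manager"):
    """Return the mongo-connector invocation as an argument list."""
    return [
        "mongo-connector",
        "--unique-key=" + unique_key,
        "-n", namespace,    # database.collection to index
        "-m", mongo_addr,   # MongoDB host:port
        "-t", solr_url,     # Solr URL including the core
        "-d", doc_manager,  # document manager for Solr
    ]


cmd = connector_command(
    "solr.wlslog", "localhost:27017", "http://localhost:8983/solr/wlslog"
)
# shlex.quote keeps the printed line safe to paste into a shell.
command_line = " ".join(shlex.quote(arg) for arg in cmd)
print(command_line)
```

The list in cmd could be handed to subprocess.run to start the connector from Python, assuming mongo-connector is on the PATH.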

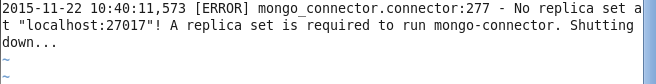

A MongoDB replica set must be running; if one is not, the following error message gets generated.

Querying Apache Solr

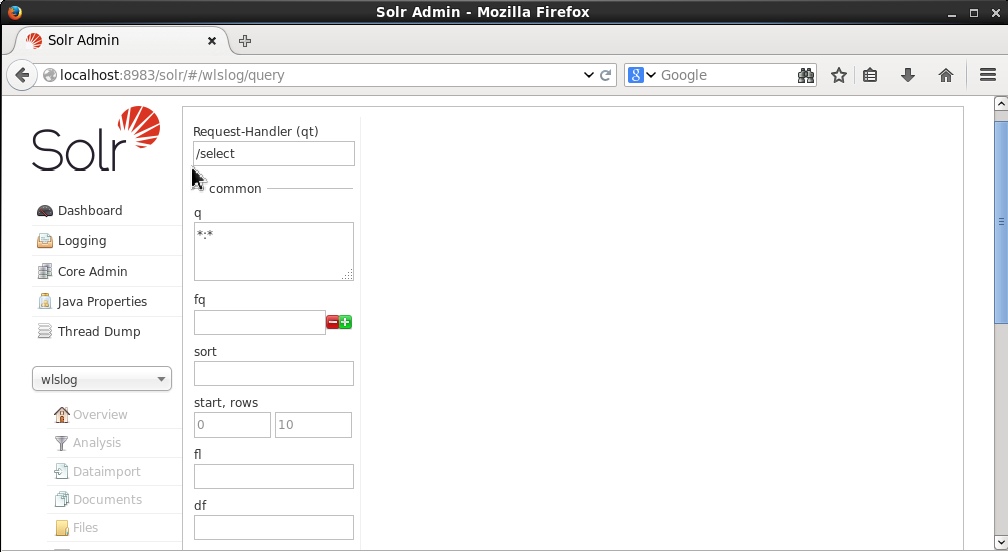

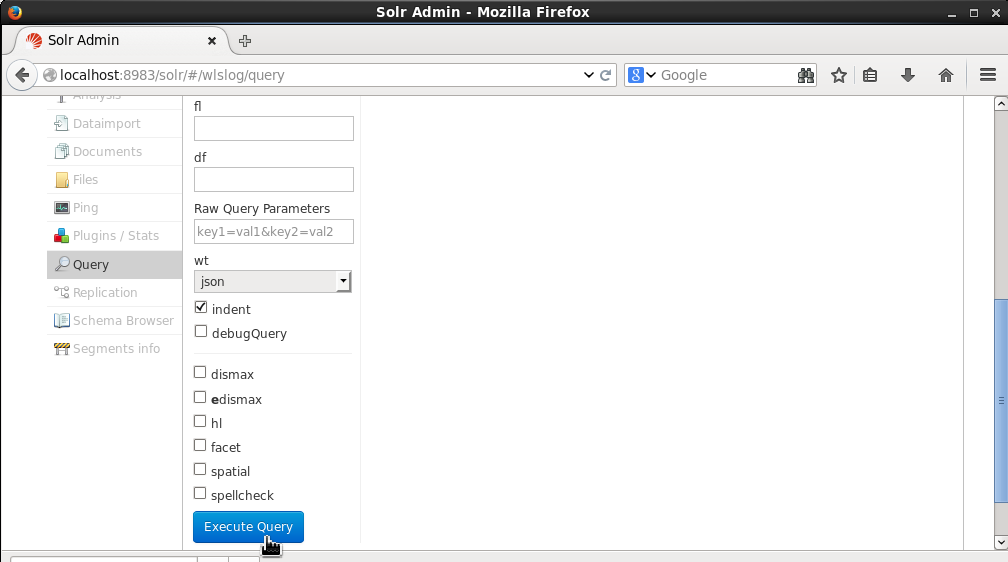

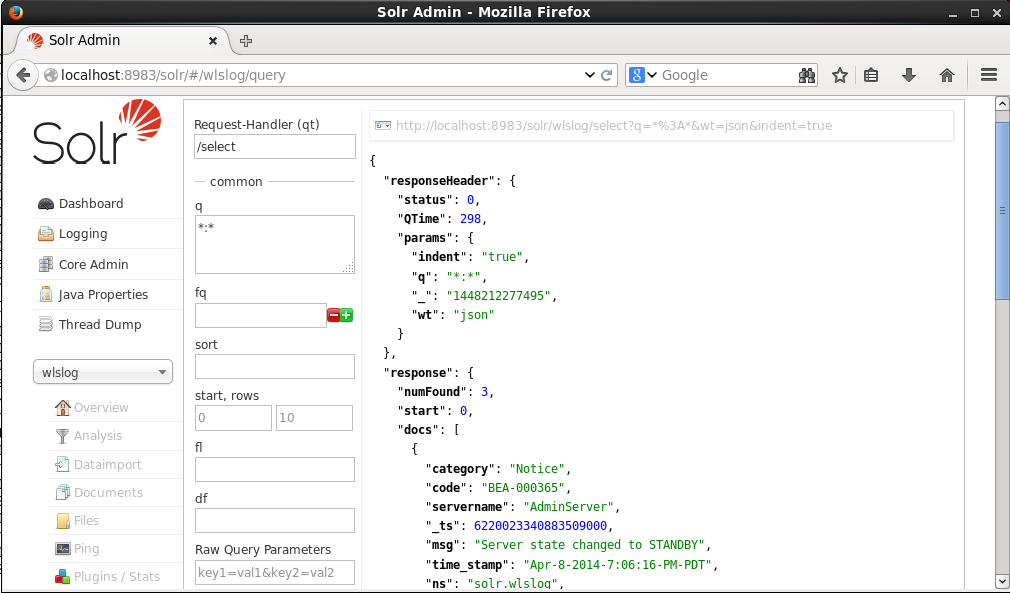

Having indexed MongoDB data in Solr, navigate to the http://localhost:8983/solr/#/wlslog/query URL in the Solr Admin Console. Alternatively, select Query for the wlslog core. The Request-Handler is /select and the query (q) is *:*.

Click on Execute Query.

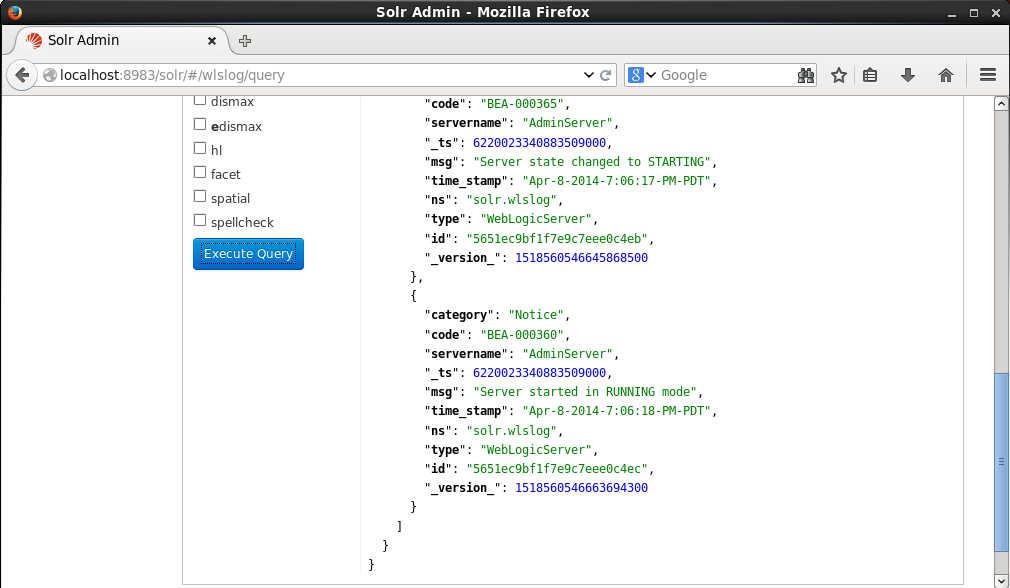

The three documents indexed in Solr from MongoDB get listed.
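The same query can be issued outside the Admin Console by requesting the /select handler directly. The following is a minimal Python sketch, assuming the core URL used in this article. The helper names select_url and docs_from_response are hypothetical, and the sketch builds the URL and parses a JSON response body without contacting the server itself.

```python
import json
from urllib.parse import urlencode


def select_url(core_url, query="*:*", rows=10):
    """Build a /select URL that asks Solr for a JSON response."""
    params = {"q": query, "rows": rows, "wt": "json"}
    return core_url.rstrip("/") + "/select?" + urlencode(params)


def docs_from_response(body):
    """Extract the list of matching documents from a Solr JSON response body."""
    return json.loads(body)["response"]["docs"]


url = select_url("http://localhost:8983/solr/wlslog")
print(url)
```

With Solr running, the body returned by urllib.request.urlopen(url).read() could be passed to docs_from_response to obtain the indexed documents.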

Each document also has the ns and _ts fields added by the document manager, and the _version_ field assigned by Solr.
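The shape of the indexed documents can be illustrated with a short Python sketch. It is not the Mongo Connector's actual code, only an approximation of the mapping described in this article: _id becomes the unique key, fields missing from the schema are dropped, and ns and _ts are added. The function name to_solr_doc and the sample timestamp are hypothetical, and _version_ is left out of the sketch.

```python
def to_solr_doc(mongo_doc, namespace, timestamp, schema_fields,
                unique_key="id"):
    """Approximate how a MongoDB document is shaped for indexing in Solr."""
    solr_doc = {}
    for key, value in mongo_doc.items():
        if key == "_id":
            # The MongoDB _id is mapped to the Solr unique key.
            solr_doc[unique_key] = str(value)
        elif key in schema_fields:
            solr_doc[key] = value
        # Any other field has no schema entry and is not indexed.
    solr_doc["ns"] = namespace   # database.collection of the source document
    solr_doc["_ts"] = timestamp  # timestamp metadata stored as a long
    return solr_doc


source = {"_id": 42, "code": "BEA-000365", "thread": "main"}
result = to_solr_doc(source, "solr.wlslog", 123, {"code"})
print(result)
```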

Summary

In this article we used the Mongo Connector to index MongoDB data in Apache Solr. The MongoDB server must be running as a replica set. The LukeRequestHandler used by the Mongo Connector must be configured in the Solr server’s solrconfig.xml. In addition to the fields to be indexed, the ns and _ts fields must be configured in schema.xml.

Hi,

I am facing serverselectiontimeouterror pymongo error, even after following the steps. Could you suggest some solution ?

Regards,

Sudarshan

Are the same versions for Apache Solr and MongoDB used? Please post detailed error message.

Hi,

I want to index fields from different mongo collections as single document in solr using mongo connector. After following the steps mentioned above for each id one document has been created in solr. So how to collect fields from different collection and to make one single document on solr?

The mongo connector only indexes from one collection at a time. To aggregate fields from different collections, first create a new collection that combines the fields from the different collections, and then index that collection so that each combined document becomes a single document in Solr.
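The combining step suggested in this reply could look roughly like the following Python sketch, which merges documents from two collections on a shared key before they are written to a new collection. The function name merge_by_key, the second collection, and its datacenter field are hypothetical, and plain dictionaries stand in for documents read from MongoDB.

```python
def merge_by_key(primary_docs, secondary_docs, key):
    """Combine documents from two collections that share a key field.

    Fields already present in the primary document are kept as they are.
    """
    lookup = {doc[key]: doc for doc in secondary_docs}
    merged = []
    for doc in primary_docs:
        combined = dict(doc)
        extra = lookup.get(doc[key], {})
        for field, value in extra.items():
            if field not in ("_id", key):
                combined.setdefault(field, value)
        merged.append(combined)
    return merged


logs = [{"_id": 1, "servername": "AdminServer", "code": "BEA-000365"}]
servers = [{"_id": 9, "servername": "AdminServer", "datacenter": "east"}]
merged_docs = merge_by_key(logs, servers, "servername")
print(merged_docs)
```

The merged documents would then be inserted into the new collection, and that collection named with the -n parameter; any added field also needs an entry in schema.xml to be indexed.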